The Illusion of Reality: Welcome to the Age of Synthetic Media

For centuries, the adage “seeing is believing” served as a foundational pillar of truth. Photographic and video evidence held enormous sway in courts, newsrooms, and personal interactions. Today, that pillar is crumbling under the assault of generative AI. We have entered the age of synthetic media, where sophisticated algorithms can fabricate hyper-realistic images, videos, and voices that are often hard to distinguish from genuine recordings. These fabrications, known as deepfakes, are not merely a tool for entertainment or social media filters; they are a profound threat to our perception of reality, our personal security, and the very fabric of societal trust.

- The Illusion of Reality: Welcome to the Age of Synthetic Media

- The Genesis of Deception: How Deepfakes Are Made

- The Devastating Impact: Deepfakes as Weapons

- The Digital Arms Race: Detecting and Countering Deepfakes

- The Future of Reality: Living with Synthetic Media

- Conclusion: Rebuilding Trust in a Post-Truth World

The speed and accessibility of deepfake technology are escalating at an alarming rate. What once required Hollywood-level visual effects studios can now be achieved on a smartphone with minimal effort. This democratized power has unleashed a torrent of malicious applications, from sophisticated financial fraud and political disinformation campaigns to insidious forms of cyberstalking and identity theft. This article delves into the unsettling truth of deepfakes: their creation, their devastating impact across industries, and the urgent, complex battle being waged by cybersecurity experts and digital forensics specialists to reclaim objective truth in a world where everything we see and hear can be manufactured. The fundamental question is no longer “Can we trust our senses?” but “How do we rebuild trust in a reality that is increasingly artificial?”

The Genesis of Deception: How Deepfakes Are Made

Understanding how deepfakes are created is the first step in recognizing their danger. At their core, deepfakes leverage advanced artificial intelligence, most famously a class of algorithms known as Generative Adversarial Networks (GANs), coupled with vast datasets of real media.

Generative Adversarial Networks (GANs): The AI Forgery Machine

GANs operate on a fascinating, competitive principle involving two neural networks:

- The Generator: This network’s job is to create new, synthetic data (e.g., a fake image or a fake voice). It starts from random noise and tries to produce something that looks or sounds real.

- The Discriminator: This network’s job is to act as a detective. It is shown both real data and the Generator’s output, and is trained to tell genuine content from synthetic content.

These two networks are trained in opposition. The Generator continuously tries to fool the Discriminator, and the Discriminator continuously gets better at spotting fakes. Through this iterative process, both networks improve, leading the Generator to produce increasingly convincing synthetic media that can eventually fool human observers.
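The competitive loop described above can be sketched in a few dozen lines. This is a deliberately tiny illustration, not how production deepfake models are built: the "media" is a single number drawn from a bell curve centered at 4.0, the Generator is the line a*z + b, and the Discriminator is a one-input logistic classifier. All class names, the learning rate, and the data distribution are assumptions chosen for the example.

```python
import math
import random


def sigmoid(t: float) -> float:
    """Numerically safe logistic function: maps a score to a probability."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


class Discriminator:
    """Scores a 1-D sample: near 1 means "looks real", near 0 means "looks fake"."""

    def __init__(self) -> None:
        self.w, self.c = 0.0, 0.0

    def prob_real(self, x: float) -> float:
        return sigmoid(self.w * x + self.c)

    def step(self, real: float, fake: float, lr: float) -> None:
        # One gradient-descent step on -[log D(real) + log(1 - D(fake))].
        p_real, p_fake = self.prob_real(real), self.prob_real(fake)
        grad_w = -(1.0 - p_real) * real + p_fake * fake
        grad_c = -(1.0 - p_real) + p_fake
        self.w -= lr * grad_w
        self.c -= lr * grad_c


class Generator:
    """Turns random noise z into a synthetic sample a*z + b."""

    def __init__(self) -> None:
        self.a, self.b = 1.0, 0.0

    def sample(self, z: float) -> float:
        return self.a * z + self.b

    def step(self, z: float, disc: Discriminator, lr: float) -> None:
        # One gradient-descent step on -log D(G(z)): nudge the output
        # toward whatever the Discriminator currently rates as real.
        p_fake = disc.prob_real(self.sample(z))
        grad_x = -(1.0 - p_fake) * disc.w
        self.a -= lr * grad_x * z
        self.b -= lr * grad_x


def train(steps: int = 2000, lr: float = 0.05, seed: int = 0):
    """Alternate Discriminator and Generator updates on toy 1-D data."""
    rng = random.Random(seed)
    gen, disc = Generator(), Discriminator()
    for _ in range(steps):
        real = rng.gauss(4.0, 0.5)                # stand-in for genuine data
        fake = gen.sample(rng.gauss(0.0, 1.0))    # current forgery
        disc.step(real, fake, lr)                 # the detective improves
        gen.step(rng.gauss(0.0, 1.0), disc, lr)   # the forger adapts
    return gen, disc
```

Even on a toy like this, the two networks can chase each other rather than settle, which hints at why training real GANs on images or audio takes large datasets and careful tuning.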

The Data Fuel: From Text to Voice to Video

The raw material for deepfakes is vast amounts of real data. The more data an AI has, the more convincing its output.

- Voice Deepfakes (Voice Cloning): To clone a voice, an AI can need as little as a few seconds to a minute of audio of the target person speaking. This audio is used to train or condition a voice synthesis model that can then generate new speech in the cloned voice, speaking any desired text. Think of it as a highly advanced text-to-speech system that closely mimics a specific human voice, including intonation, accent, and emotional cadence. This technology is becoming so sophisticated that companies are using it for virtual assistants and personalized audiobook narration. However, its darker side includes its use in vishing (voice phishing) attacks.

- Video Deepfakes (Face Swapping/Lip Syncing): Video deepfakes are more complex but equally accessible. They typically involve:

  - Face Swapping: Replacing one person’s face in a video with another’s. This requires a dataset of images/videos of the target person’s face.

  - Lip Syncing: Manipulating a person’s mouth in a video to match a new audio track. This is often used to make it appear as if someone is saying words they never uttered.

Accessibility: The Democratization of Deception

What was once confined to specialized labs is now ubiquitous. Free online tools, smartphone apps (like Reface or FaceApp), and open-source libraries (like DeepFaceLab) have made deepfake creation accessible to virtually anyone with a computer and an internet connection. This democratization is a double-edged sword: enabling creative expression but also fueling a rapid proliferation of malicious content, making it a critical cybersecurity threat.

The Devastating Impact: Deepfakes as Weapons

The implications of deepfake technology extend far beyond simple pranks. They are actively being weaponized to perpetrate fraud, sow political discord, and inflict severe personal harm across various sectors.

1. Financial Fraud and Business Email Compromise (BEC)

Deepfakes have added a terrifying new dimension to financial crime.

- The “Vishing” Epidemic: Voice deepfakes are being used in sophisticated vishing attacks. Imagine receiving a call from what sounds exactly like your CEO, demanding an urgent wire transfer to a new account. In one widely reported case from early 2020, a bank manager in the UAE was tricked into authorizing $35 million in transfers after a phone call that used a cloned voice of a company director. This type of incident is becoming increasingly common, with global losses due to such sophisticated social engineering rising dramatically year over year.

- Business Email Compromise (BEC) Evolution: BEC scams, which traditionally rely on fake emails, are now being augmented with deepfake audio and video. A scammer might send a fraudulent email and then follow up with a deepfake video call, impersonating a senior executive to pressure the victim into complying. Cybersecurity firms report that deepfake-enabled BEC attempts are a growing concern for enterprises.

2. Disinformation and Political Destabilization

Deepfakes pose an existential threat to democracy and public trust, especially during electoral cycles.

- Political Campaigns: Fabricated videos of politicians making controversial statements, or audio clips of candidates appearing to confess to misdeeds, can spread like wildfire on social media, influencing public opinion and eroding trust in legitimate news sources. The potential for a deepfake to go viral and sway an election outcome in a critical moment is a profound disinformation risk that governments and election security experts are grappling with globally.

- Geopolitical Conflict: Deepfakes can be deployed by state-sponsored actors to create fabricated evidence of atrocities, incite violence, or escalate international tensions. The ability to create convincing, yet entirely fake, narratives has massive implications for global stability and misinformation management.

3. Personal Harm: Identity Theft, Extortion, and Reputation Damage

At a personal level, deepfakes can inflict profound and lasting damage.

- Identity Theft and Biometric Security Risks: If a deepfake of your face or voice can unlock a device or pass a biometric verification, it creates a critical vulnerability for identity theft. While many biometric security systems are becoming more robust against synthetic media, the arms race between deepfake creators and detection systems is constant.

- Non-Consensual Deepfake Pornography: A significant and deeply harmful application of deepfake technology is the creation of non-consensual pornography, where individuals’ faces are superimposed onto explicit content. This is a serious violation of privacy that often leads to severe psychological distress and reputational ruin for victims. Legal frameworks globally are struggling to keep pace with this rapidly evolving form of digital abuse, making it a major concern for AI ethics and online safety advocates.

- Reputation Damage and Cyberstalking: Deepfakes can be used to fabricate embarrassing or damaging content, spreading false narratives about individuals, leading to professional and personal ruin. This form of cyberstalking can be incredibly difficult to combat, as the fake content can quickly spread beyond the victim’s control.

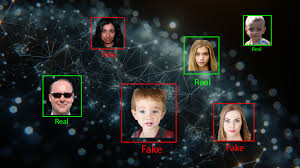

The Digital Arms Race: Detecting and Countering Deepfakes

The fight against deepfakes is a high-stakes technological arms race. As deepfake creation tools become more sophisticated, so too must the digital forensics and AI security tools designed to detect them.

1. AI-Powered Detection Tools

The most effective way to fight AI-generated fakes is often with more AI.

- Forensic Analysis: Researchers are developing AI models that can analyze subtle imperfections in deepfakes that are imperceptible to the human eye. These might include inconsistent blinking patterns, unnatural head movements, mismatched light reflections in the eyes, or specific digital artifacts left by the generation process.

- Biometric Liveness Detection: Systems are being developed to detect “liveness” for biometric authentication, differentiating between a real human face/voice and a deepfake. This might involve tracking micro-expressions, blood flow under the skin (pulsating light changes), or subtle variations in speech patterns unique to human biology. Companies like FacePhi are developing advanced biometric authentication solutions to counter deepfake attacks.

- Watermarking and Provenance: A promising future solution involves embedding invisible digital watermarks into legitimate media at the point of capture. This would allow automated systems to verify the origin and authenticity of content, similar to a digital certificate of authenticity. The Content Authenticity Initiative (CAI), backed by Adobe, Microsoft, and others, is actively working on standards for content provenance to combat disinformation.
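One of the cues listed above, inconsistent blinking, is simple enough to illustrate directly. The sketch below assumes that some upstream face-landmark model (not shown, and hypothetical here) has already produced a per-frame "eye openness" score between 0 and 1. The function names and every threshold are illustrative assumptions; real detectors are trained classifiers that combine many signals rather than a single hand-set rule.

```python
from typing import Sequence


def count_blinks(eye_openness: Sequence[float], closed_below: float = 0.2) -> int:
    """Count open-to-closed transitions in a per-frame eye-openness series."""
    blinks = 0
    was_closed = False
    for value in eye_openness:
        is_closed = value < closed_below
        if is_closed and not was_closed:
            blinks += 1  # a new blink starts when the eye first closes
        was_closed = is_closed
    return blinks


def blink_rate_is_suspicious(
    eye_openness: Sequence[float],
    fps: float,
    min_per_minute: float = 4.0,   # illustrative lower bound, not a standard
    max_per_minute: float = 40.0,  # illustrative upper bound, not a standard
) -> bool:
    """Flag clips whose blink rate falls outside a plausible human range."""
    if not eye_openness or fps <= 0:
        raise ValueError("need at least one frame and a positive frame rate")
    minutes = len(eye_openness) / fps / 60.0
    rate = count_blinks(eye_openness) / minutes
    return rate < min_per_minute or rate > max_per_minute
```

A rule like this is easy for a forger to defeat once it is known, which is one reason detection tends toward learned models that are retrained as generation techniques change.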

2. Regulatory and Legal Responses

Governments and international bodies are grappling with how to regulate deepfake technology without stifling innovation.

- Legislation and Accountability: Countries are beginning to pass laws specifically targeting malicious deepfakes, particularly non-consensual pornography and election interference. The EU’s AI Act, for example, is set to mandate clear labeling for AI-generated content, forcing creators to disclose when media is synthetic [[Source 1.1]]. This proactive legislative approach is crucial for establishing accountability.

- Platform Responsibility: Social media companies and tech platforms are under increasing pressure to implement robust deepfake detection and removal policies. This involves significant investment in moderation tools and partnerships with fact-checking organizations to quickly identify and label or remove harmful content.

3. Public Education and Media Literacy

Ultimately, technology alone cannot solve the deepfake problem. Human vigilance and critical thinking are paramount.

- Critical Thinking Skills: Educating the public on how to identify red flags in suspicious media (e.g., unnatural movements, strange audio artifacts, inconsistent lighting) is vital. Promoting media literacy empowers individuals to question what they see and hear online.

- “Trust No One” Protocol: Encouraging a “zero-trust” mindset when encountering unexpected or emotionally charged digital content, especially in financial or sensitive contexts, is becoming a necessary personal cybersecurity best practice. This involves verifying information through independent, established channels.
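For financial requests, "verifying through independent, established channels" can be written down as an explicit rule. The sketch below is one hypothetical way to encode it: the `PaymentRequest` type, the `directory` of known contact numbers, and both function names are assumptions made for illustration, not part of any real product or standard.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class PaymentRequest:
    claimed_sender: str
    amount: float
    callback_number: str  # number supplied inside the request itself


def number_to_call(request: PaymentRequest, directory: Dict[str, str]) -> str:
    """Return the independently known number for the claimed sender.

    The number embedded in the request is deliberately ignored, because an
    attacker who forged the request controls that field too.
    """
    if request.claimed_sender not in directory:
        raise LookupError("no trusted contact on file; escalate manually")
    return directory[request.claimed_sender]


def may_release_funds(
    request: PaymentRequest, confirmed_via: str, directory: Dict[str, str]
) -> bool:
    """Allow the transfer only if it was confirmed over the directory number."""
    return confirmed_via == number_to_call(request, directory)
```

The point of the design is that a convincing voice or video never counts as confirmation by itself; only a callback placed to a number the organization already held does.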

The Future of Reality: Living with Synthetic Media

The proliferation of deepfakes marks a fundamental shift in our relationship with information. The ability to create convincing fakes at scale means that objective truth, as historically understood, is under constant siege. This is not a temporary challenge; it is the new normal.

The Inevitable Integration and the Ethical Dilemma

Deepfakes will not disappear; they will become even more sophisticated and integrated into everyday life. From personalized marketing and virtual influencers to enhanced online education and even therapeutic applications (e.g., creating virtual personas of deceased loved ones for grieving processes), the benign uses of synthetic media are vast.

The ethical challenge lies in drawing clear lines between harmless creative expression and malicious deception. The debate around AI ethics and governance will intensify as these technologies become indistinguishable from reality, forcing societies to redefine what constitutes authentic communication.

The Content Authenticity Initiative (CAI) and Digital Signatures

Initiatives like the Content Authenticity Initiative (CAI) are working on an industry standard for digital “nutrition labels” for media. This involves embedding cryptographically secure metadata into photos, videos, and audio files at the point of capture, indicating who created the content, where it was created, and if it has been altered. This digital signature approach is our best hope for providing verifiable provenance in an increasingly synthetic world, allowing consumers and platforms to make informed decisions about the media they consume. However, widespread adoption across all devices and platforms is a monumental undertaking.
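The core idea, binding "who, where, and has it been altered" to the media itself, can be sketched with Python's standard library. This is a simplified stand-in rather than the CAI's actual format: it uses an HMAC with a shared secret where real provenance systems rely on public-key signatures and certificates, and the manifest fields, function names, and `device_key` are all assumptions made for the example.

```python
import hashlib
import hmac
import json


def sign_capture(media: bytes, creator: str, location: str, device_key: bytes) -> dict:
    """Build a manifest tying metadata to the media's hash, then sign it."""
    manifest = {
        "creator": creator,
        "location": location,
        "sha256": hashlib.sha256(media).hexdigest(),
    }
    payload = json.dumps(manifest, sort_keys=True).encode("utf-8")
    manifest["signature"] = hmac.new(device_key, payload, hashlib.sha256).hexdigest()
    return manifest


def verify_capture(media: bytes, manifest: dict, device_key: bytes) -> bool:
    """Check that neither the metadata nor the media changed since signing."""
    claimed = {k: v for k, v in manifest.items() if k != "signature"}
    payload = json.dumps(claimed, sort_keys=True).encode("utf-8")
    expected = hmac.new(device_key, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, str(manifest.get("signature", "")))
    content_ok = claimed.get("sha256") == hashlib.sha256(media).hexdigest()
    return signature_ok and content_ok
```

Changing a single byte of the media, or editing the creator field, makes `verify_capture` return `False`. What a scheme like this cannot do is say anything about media that was never signed in the first place, which is why broad adoption matters so much.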

Daily Deepfake Landscape (Q4 2025 Trends)

- Deepfake Production Surge: A recent report by DeepMedia indicates a 700% increase in deepfake video production in 2024 compared to 2023, with a significant portion being used for financial fraud and identity theft. This rapid increase highlights the escalating threat.

- Corporate Security Focus: Major corporations are now investing heavily in deepfake detection software and mandatory employee training programs to combat advanced social engineering attacks. This reflects a shift from reactive defense to proactive education.

- Legislative Momentum: The US Senate held hearings in late 2025 on the threat of AI-generated content in elections, a sign of growing legislative urgency to regulate this technology before major election cycles in 2026.

Conclusion: Rebuilding Trust in a Post-Truth World

The era where we could implicitly trust our eyes and ears is over. Deepfakes represent a technological leap that forces us to fundamentally re-evaluate how we consume information, verify authenticity, and protect our identities. The threat is pervasive, touching everything from the global financial system to the sanctity of personal reputation.

While the challenge is immense, it is not insurmountable. The combined efforts of AI security research, robust legal frameworks, proactive platform moderation, and widespread public education are critical in this ongoing digital arms race. The future demands a heightened sense of skepticism, a commitment to verifying sources, and a collective effort to rebuild trust in a world where reality itself can be manufactured. The question “Can you trust your eyes?” has never been more pertinent, and the answer increasingly depends on our collective ability to adapt, educate, and innovate faster than the purveyors of digital deception.